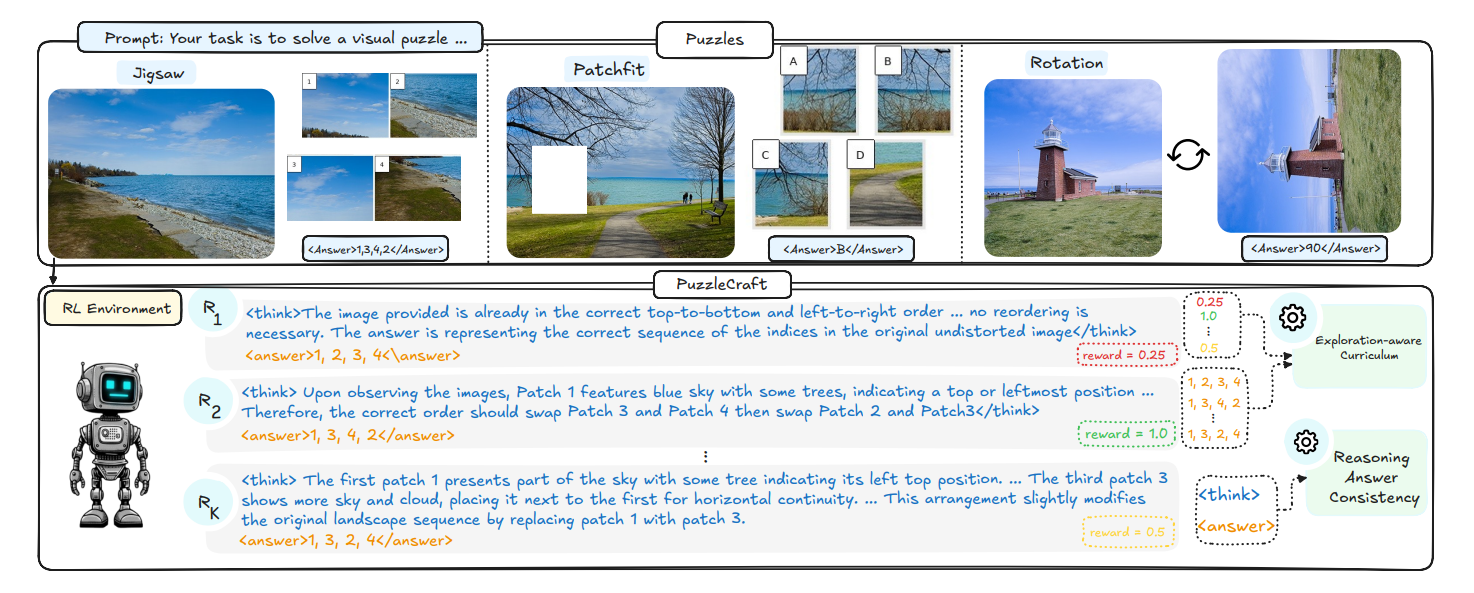

PuzzleCraft: Exploration-Aware Curriculum Learning for Puzzle-Based RLVR in VLMs

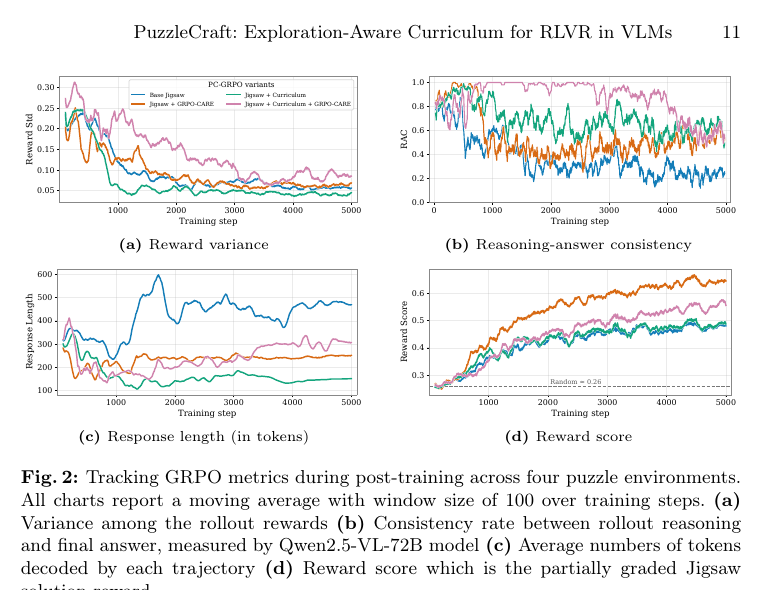

PuzzleCraft studies how lightweight visual puzzles, exploration-aware curricula, and reasoning–answer consistency can make puzzle-based reinforcement learning with verifiable rewards (RLVR) more effective for vision–language models (VLMs).